Laser technology can see around corners and help rescue efforts

Researchers at Stanford University are developing laser technology that can produce images of objects that are not in a direct line of view, such as those hidden around corners.

The immediate goal is to create applications for autonomous cars, but lasers with this level of sensitivity could ultimately be used by rescue teams to search through foliage from aerial vehicles or to find people buried in debris.

Gordon Wetzstein is an assistant professor of Electrical Engineering and senior author of the study. “It sounds like magic but the idea of non-line-of-sight imaging is actually feasible,” he said.

This is not the first team to develop techniques for bouncing lasers around corners. What makes this new research significant is that scientists at Stanford have produced a more effective algorithm for capturing the images of unseen objects.

“A substantial challenge in non-line-of-sight imaging is figuring out an efficient way to recover the 3D structure of the hidden object from the noisy measurements,” said study co-author David Lindell. “I think the big impact of this method is how computationally efficient it is.”

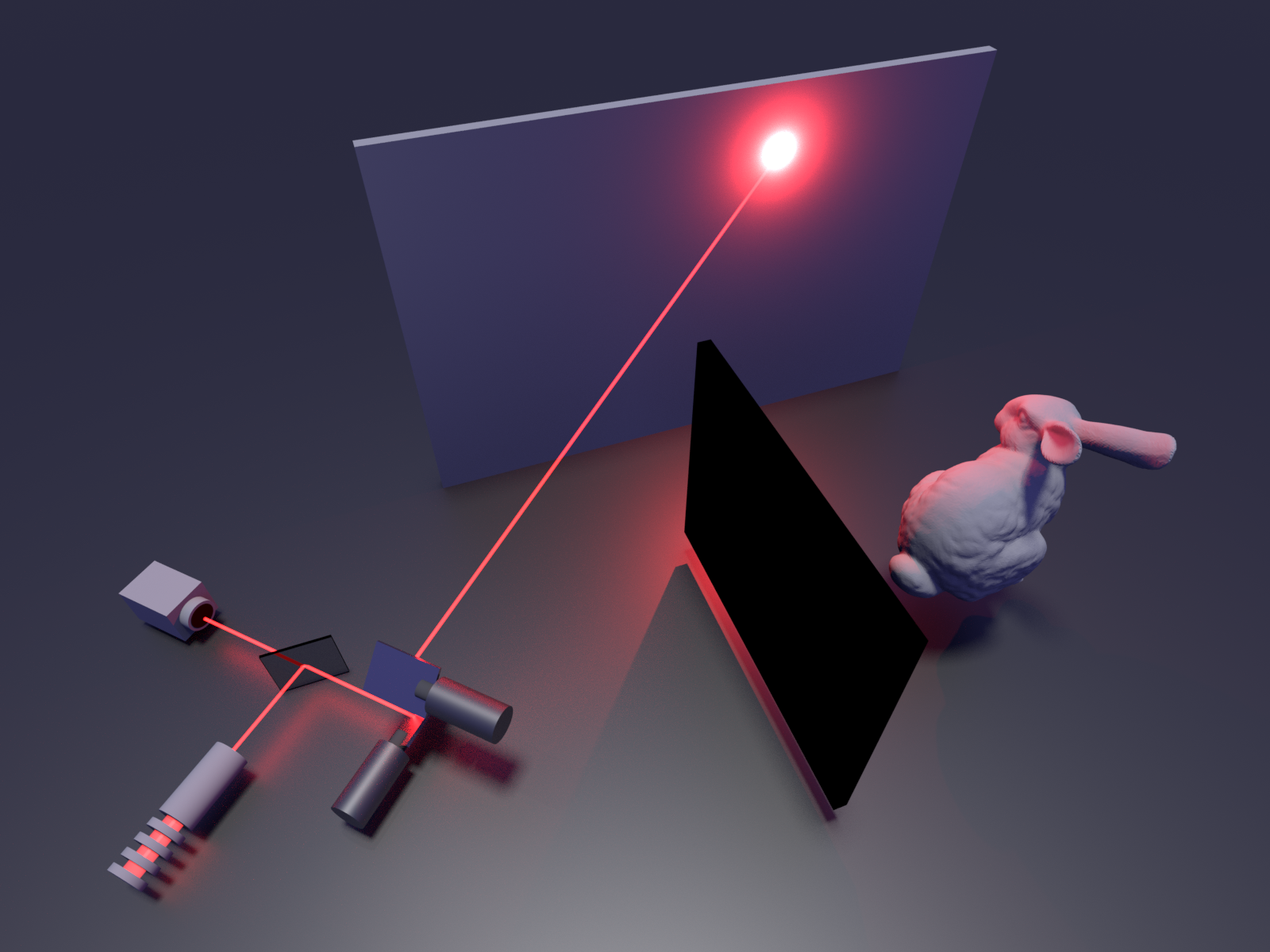

The researchers placed a highly sensitive photon detector, which is capable of recording even a single light particle, next to a laser. They fired beams of light at a wall; the light bounced off the wall onto objects around the corner, then traveled back to the wall and on to the detector.
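
To give a rough sense of how a returning photon can carry distance information, the Python sketch below converts a photon's travel time into an estimated distance between the wall and a hidden object. It is an illustration only, not the Stanford team's algorithm. It assumes the detector records when each photon arrives, that the laser and detector sit the same distance from the wall, and that the photon leaves and re-enters the wall at the same spot. The function name and all numbers are hypothetical.

```python
# Speed of light in a vacuum, in metres per second (air is treated as vacuum here).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def hidden_object_distance_m(arrival_time_s: float, device_to_wall_m: float) -> float:
    """Estimate the distance from the wall to a hidden object.

    Assumed light path (hypothetical, simplified):
    laser -> wall -> hidden object -> wall -> detector.
    """
    # Total distance the photon covered between leaving the laser and being detected.
    total_path_m = SPEED_OF_LIGHT_M_PER_S * arrival_time_s

    # Remove the two visible legs: laser to wall, and wall back to the detector.
    hidden_round_trip_m = total_path_m - 2.0 * device_to_wall_m
    if hidden_round_trip_m < 0:
        raise ValueError("arrival time is too short for the given wall distance")

    # The hidden leg is travelled twice (out and back), so halve it.
    return hidden_round_trip_m / 2.0


# Hypothetical reading: a photon detected 20 nanoseconds after the laser fired,
# with the laser and detector both 1 metre from the wall.
distance_m = hidden_object_distance_m(arrival_time_s=20e-9, device_to_wall_m=1.0)
print(f"Estimated wall-to-object distance: {distance_m:.2f} m")
```

A single timing like this narrows the object down to a distance from one spot on the wall, not a position. Recovering a 3D shape means combining many such measurements, which is the reconstruction problem the researchers' algorithm addresses.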

The scanning process currently takes anywhere from two minutes to an hour, depending on the lighting conditions and the reflectivity of the hidden objects.

After the reflection of an object is captured, the algorithm used by the team can reconstruct the original paths of light particles to sharpen the image. Enhancement of the image takes less than a second, and the researchers believe that it will eventually be instantaneous.

There are some adjustments that still need to be made before the new imaging technology hits the road. For example, the technique relies on scattered light particles, which are ignored by the LIDAR systems that guide autonomous vehicles.

“We believe the computation algorithm is already ready for LIDAR systems,” said co-lead author Matthew O’Toole. “The key question is if the current hardware of LIDAR systems supports this type of imaging.”

The researchers are also working to adapt the system to real-world conditions, where lighting and the distance of objects can cause scanning issues. The imaging system must also be modified to detect moving objects more effectively.

“This is a big step forward for our field that will hopefully benefit all of us,” said Wetzstein. “In the future, we want to make it even more practical in the ‘wild.’”

The study is published in the journal Nature.

—

By Chrissy Sexton, Earth.com Staff Writer

Image Credit: Stanford Computational Imaging Lab