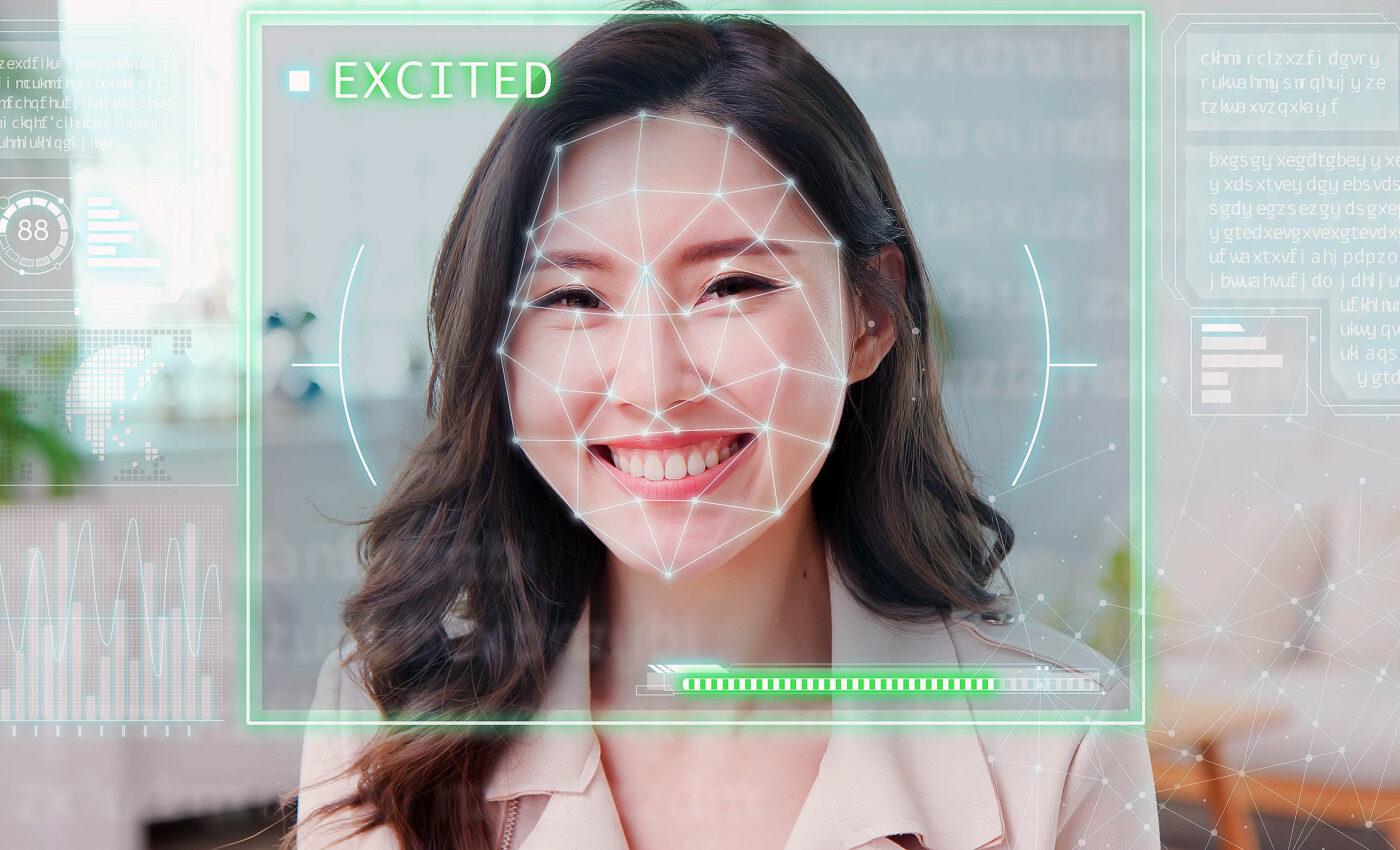

Wearable "PSiFI" AI device interprets its wearer's emotions in real time

Scientists have unveiled PSiFI, a wearable technology capable of recognizing human emotions in real time.

This development from Professor Jiyun Kim and his team in the Department of Materials Science and Engineering at UNIST (Ulsan National Institute of Science and Technology) could bring emotion-based services to next-generation wearable devices across a variety of sectors, changing how we interact with technology.

Historically, accurately discerning emotional states has been a formidable challenge due to the inherently abstract and multifaceted nature of human emotions, moods, and feelings.

Addressing this challenge, Professor Kim’s team has introduced a cutting-edge multi-modal human emotion recognition system. This system uniquely merges verbal and non-verbal cues, offering a holistic approach to understanding human emotions.

PSiFI: The heart of emotion recognition

At the heart of this system is the Personalized Skin-Integrated Facial Interface (PSiFI). Self-powered, simple, flexible, and transparent, PSiFI incorporates a bidirectional triboelectric strain and vibration sensor.

This sensor captures verbal and non-verbal expressions at the same time, picking up both facial muscle deformation and voice. Coupled with a data processing circuit, the system transfers data wirelessly, enabling real-time recognition of emotions.

Empowered by machine learning algorithms, the technology showcases remarkable efficiency in recognizing human emotions accurately and promptly, even in scenarios where individuals wear masks.
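To make the idea of multi-modal recognition concrete, here is a minimal sketch of one way strain and vibration readings could be fused and classified from only a few training examples. The article does not specify the team's model, so the nearest-centroid classifier below is a stand-in chosen for brevity, and every feature value, label, and function name is a hypothetical illustration rather than data or code from the study.

```python
import math
from statistics import fmean

def fuse(strain, vibration):
    """Early fusion: concatenate the features of both modalities into one vector."""
    return list(strain) + list(vibration)

def train_centroids(samples):
    """Average the feature vectors of each label; returns label -> centroid."""
    grouped = {}
    for label, vec in samples:
        grouped.setdefault(label, []).append(vec)
    return {
        label: [fmean(column) for column in zip(*vecs)]
        for label, vecs in grouped.items()
    }

def classify(centroids, vec):
    """Pick the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))

# Made-up readings: two strain features (facial movement) and one
# vibration feature (voice) per sample. Not measurements from the study.
TRAINING = [
    ("happy", fuse([0.8, 0.1], [0.6])),
    ("happy", fuse([0.9, 0.2], [0.7])),
    ("sad", fuse([0.1, 0.7], [0.2])),
    ("sad", fuse([0.2, 0.8], [0.1])),
]

centroids = train_centroids(TRAINING)
prediction = classify(centroids, fuse([0.85, 0.15], [0.65]))
print(prediction)
```

With two examples per emotion, the classifier already separates these toy readings, which mirrors the article's point about needing only a few learning steps. A real system would work on far richer signals.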

Its practical application has already been demonstrated in a digital concierge service within a virtual reality (VR) environment, underscoring its versatility and potential for widespread adoption.

Science behind the PSiFI AI technology

The foundation of this PSiFI AI technology lies in friction charging, also known as the triboelectric effect, in which charges separate when two materials rub against each other. The sensor generates its own electrical signal this way, eliminating the need for external power sources or intricate measurement devices.

Professor Kim elaborated on the development process, highlighting the use of a semi-curing technique for creating a transparent conductor crucial for the friction charging electrodes.

“Based on these technologies, we have developed a skin-integrated face interface (PSiFI) system that can be customized for individuals,” Kim explained.

A personalized mask, designed from images of the wearer's face captured at multiple angles, fits the system to each individual while combining flexibility, elasticity, and transparency.

Jin Pyo Lee, the lead author of the study, expressed optimism about how practical the system is to use.

“With this developed system, it is possible to implement real-time emotion recognition with just a few learning steps and without complex measurement equipment,” Lee said.

“This opens up possibilities for portable emotion recognition devices and next-generation emotion-based digital platform services in the future.”

Future possibilities and applications

This breakthrough heralds the advent of portable emotion recognition devices and paves the way for future emotion-based digital platforms.

The team’s experiments in real-time emotion recognition, involving the collection of multimodal data like facial muscle deformation and voice, demonstrated high accuracy with minimal training. The PSiFI system’s wireless, customizable nature promises easy wearability and convenience.

Expanding its application to VR environments, the system acts as a digital concierge in various settings such as smart homes, private movie theaters, and smart offices.

By identifying individual emotions, it offers personalized recommendations for music, movies, and books, showcasing its potential to create more intuitive and personalized user experiences.
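As a rough illustration of how a digital concierge might turn a recognized emotion into a suggestion, consider the lookup below. It is only a sketch under stated assumptions: the catalog contents, the emotion labels, and the `recommend` function are all hypothetical and do not come from the study.

```python
# Hypothetical catalog: emotions and suggestions are illustrative only.
CATALOG = {
    "happy": {
        "music": "upbeat pop playlist",
        "movie": "feel-good comedy",
        "book": "travel memoir",
    },
    "sad": {
        "music": "calm acoustic playlist",
        "movie": "uplifting drama",
        "book": "comfort-read novel",
    },
}

# Neutral suggestions used when the recognized emotion is not in the catalog.
FALLBACK = {
    "music": "editor's picks playlist",
    "movie": "popular this week",
    "book": "bestseller list",
}

def recommend(emotion, category):
    """Return a suggestion for an emotion and a media category."""
    if category not in FALLBACK:
        raise ValueError(f"unknown category: {category}")
    return CATALOG.get(emotion, FALLBACK)[category]

print(recommend("happy", "music"))
print(recommend("confused", "book"))
```

The fallback keeps the service responsive when the recognizer reports an emotion the catalog does not cover.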

Professor Kim emphasized the crucial role of Human-Machine Interface (HMI) devices in facilitating effective interactions between humans and machines.

“For effective interaction between humans and machines, human-machine interface (HMI) devices must be capable of collecting diverse data types and handling complex integrated information,” Kim said.

“This study exemplifies the potential of using emotions, which are complex forms of human information, in next-generation wearable systems.”

Next PSiFI steps: Harmonizing humanity and technology

In summary, Professor Jiyun Kim and his team at UNIST have propelled us into a new era of technological intimacy with their pioneering emotion recognition technology.

By integrating verbal and non-verbal cues through the PSiFI system, they have made it possible for machines to recognize human emotions in real time, enabling more empathetic and personalized digital interactions.

This fascinating technology marks a significant leap in human-machine interfaces and opens the door to a future where technology can adapt to our emotional states, enhancing our daily lives in ways we’ve only begun to imagine.

As we stand on the brink of this exciting frontier, the possibilities for deepening our connection with the digital world are limitless, promising a future where technology truly understands us.

The full study was published in the journal Nature Communications.
